Wan 2.7 Video Review: More Control, Less Reroll, and a Better Fit for Directed Short Video Work

- 1. Quick comparison table for this Wan 2.7 video review

- 2. What Wan 2.7 actually is - and why this release matters

- 3. How Wan 2.7 should be evaluated

- 4. The most useful upgrade: less reroll, more control

- 5. Why Wan 2.7 works best when you start from references

- 6. What Wan 2.7 still does not solve

- 7. Best use cases - and who should probably skip it

- 8. Wan 2.6 vs Wan 2.7 - should you actually upgrade?

- 9. Where Wan 2.7 fits in a real workflow

- 10. FAQ

- 11. Final verdict

A lot of launch-week coverage made Wan 2.7 sound like a dramatic turning point.

That is only partly true.

The more useful reading is simpler: Wan 2.7 matters because it pushes AI video toward more control, stronger reference-led workflows, and less wasted rerolling. Not because it suddenly solves every hard part of video production.

That distinction matters.

If you are evaluating this model for actual work - short ads, product clips, character-led scenes, social content, previs, or stylized promos - the real question is not whether Wan 2.7 can make one impressive demo. The real question is whether it gives you more direction before generation and fewer headaches after it.

1. Quick comparison table for this Wan 2.7 video review

| Model / category | Best for | What stands out | What still feels limited | My take |
|---|---|---|---|---|
| Wan 2.7 | Directed short-form video work | Better endpoint control, reference-led workflows, broader creation stack | Still short-form, still generation-first, still early in the release cycle | Stronger as a workflow model than as a pure "wow sample" model |
| Older Wan-era prompt-first usage | Basic cinematic ideation | Good for rough concept generation | Weaker when you need transitions, guided endpoints, or structured revision | Fine for exploration, less persuasive for revision-heavy work |
| One-shot prompt-first AI video tools | Fast experimentation | Easy to try and share | More rerolls, less directed control, weaker shot planning | Good for discovery, less useful once constraints matter |

The most useful test here is not image quality alone.

It is whether the model gives you a cleaner path from intent to usable clip.

See the Wan 2.7 video model on GoEnhance

2. What Wan 2.7 actually is - and why this release matters

Wan 2.7 is better understood as a video creation stack than as one narrow prompt-to-video update.

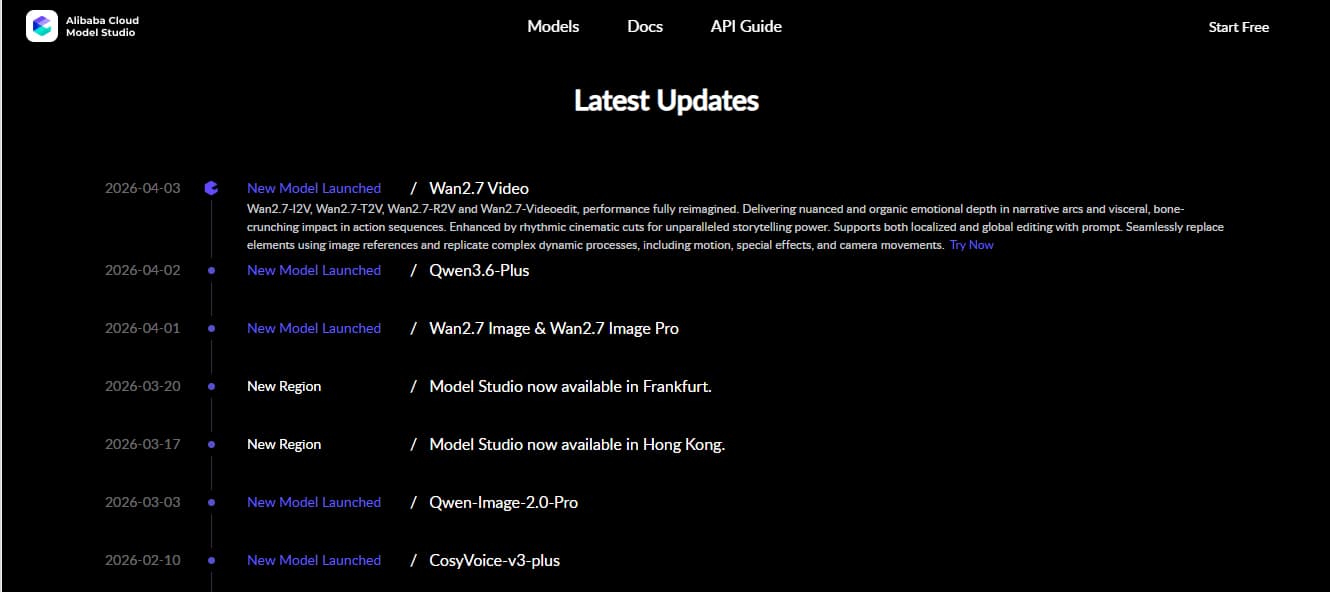

Alibaba Cloud Model Studio's April 3 update explicitly lists Wan2.7-I2V, Wan2.7-T2V, Wan2.7-R2V, and Wan2.7-Videoedit, which immediately tells you this is not a one-lane release. It is a broader family built around generation, reference, and editing logic rather than a single showcase feature.

That matters because most AI video frustration does not come from the first output.

It comes from the second and third attempt.

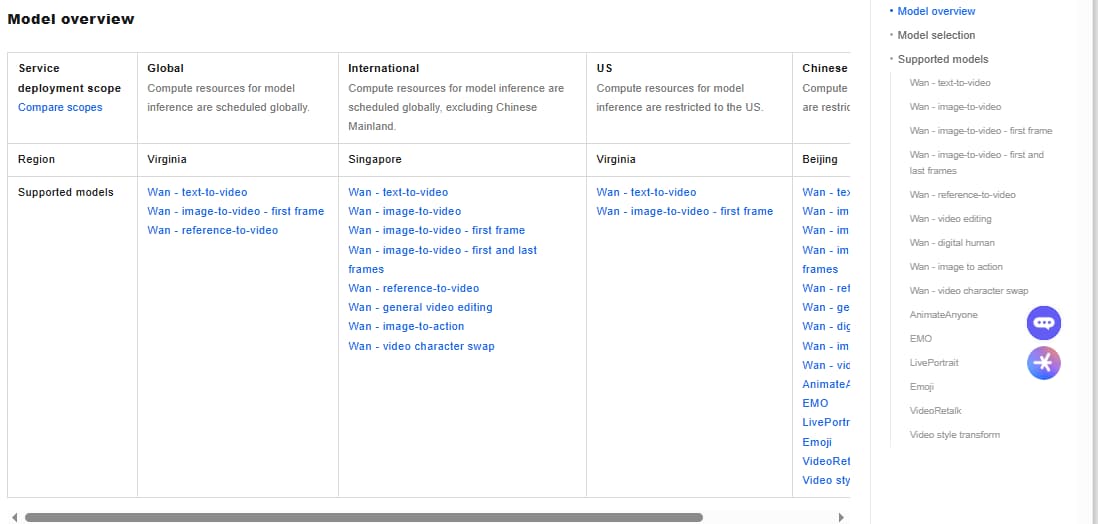

The official video generation overview reinforces that framing. Instead of presenting only one "make a video from text" route, it separates the current Wan video stack into text-to-video, image-to-video from a first frame, image-to-video from first and last frames, reference-to-video, and general video editing. That is a much more practical way to think about the model.
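To make that split concrete, here is a minimal Python sketch of how I would choose between those five routes based on the material already in hand. The `Workflow` enum and `pick_workflow` function are hypothetical names invented for illustration, and the priority order is my own assumption. None of this is Alibaba Cloud's API.

```python
from enum import Enum


class Workflow(Enum):
    # Labels follow the five routes named in the official overview.
    TEXT_TO_VIDEO = "text-to-video"
    FIRST_FRAME = "image-to-video (first frame)"
    FIRST_AND_LAST_FRAME = "image-to-video (first and last frames)"
    REFERENCE_TO_VIDEO = "reference-to-video"
    VIDEO_EDITING = "general video editing"


def pick_workflow(
    has_first_frame: bool = False,
    has_last_frame: bool = False,
    has_reference: bool = False,
    has_source_video: bool = False,
) -> Workflow:
    """Pick the most constrained route the available inputs allow.

    The ordering below is an assumption for illustration: the more
    source material you have, the less you leave to the prompt.
    """
    if has_source_video:
        return Workflow.VIDEO_EDITING
    if has_first_frame and has_last_frame:
        return Workflow.FIRST_AND_LAST_FRAME
    if has_first_frame:
        return Workflow.FIRST_FRAME
    if has_reference:
        return Workflow.REFERENCE_TO_VIDEO
    # Nothing but a prompt: fall back to plain text-to-video.
    return Workflow.TEXT_TO_VIDEO
```

Calling `pick_workflow(has_first_frame=True, has_last_frame=True)` returns `Workflow.FIRST_AND_LAST_FRAME`. With no inputs at all, it falls back to `Workflow.TEXT_TO_VIDEO`.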

For readers comparing tools in 2026, this is the part worth paying attention to: Wan 2.7 is not just trying to be more cinematic. It is trying to be more directable.

Alibaba Cloud Model Studio's April 3 update is the cleanest official source for the release scope, and Alibaba Cloud's video generation model guide is the clearest official source for how that broader Wan workflow is currently framed.

Bottom line: Wan 2.7 is interesting because it expands how you can direct a video, not just how you can prompt one.

3. How Wan 2.7 should be evaluated

The wrong way to review Wan 2.7 is to ask whether one output frame looks beautiful.

That test is too easy. And honestly, too shallow.

The better test is whether the model gives you more control over the parts that usually break: shot transitions, start-to-end motion logic, consistency when using references, and the ability to steer a sequence without throwing the whole thing away.

This is where a lot of launch content drifts into marketing language. Phrases like "director era" or "AI directing system" sound big, but they do not help much unless you translate them into actual workflow value.

In practice, a model becomes more useful when it lets you:

- guide how a shot begins

- influence how it should land

- rely on source material more heavily

- reduce the number of full reruns needed to get a usable clip

That is the lens that makes Wan 2.7 worth reviewing seriously.
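If you want to turn that lens into a number, one option is to log how many generations each shot took and how many you kept. The sketch below is a hypothetical tracking helper in Python. `ShotLog` and `attempts_per_usable` are names I made up, and the metric is my own suggestion, not something Alibaba Cloud or GoEnhance publishes.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ShotLog:
    shot: str       # any label you use for the shot
    attempts: int   # total generations run for this shot
    usable: int     # generations you actually kept


def attempts_per_usable(logs: Sequence[ShotLog]) -> float:
    """Average number of generations spent per usable clip (lower is better)."""
    total_attempts = sum(log.attempts for log in logs)
    total_usable = sum(log.usable for log in logs)
    if total_usable == 0:
        # Nothing usable yet: report infinity instead of dividing by zero.
        return float("inf")
    return total_attempts / total_usable


# Made-up counts, purely to show the call. These are not measurements.
example_logs = [ShotLog("shot_a", attempts=4, usable=1),
                ShotLog("shot_b", attempts=2, usable=1)]
print(attempts_per_usable(example_logs))
```

Run the same shot list through a prompt-only route and a guided route, then compare the two numbers. That gives you a rough answer that does not depend on how good any single frame looks.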

Bottom line: The right Wan 2.7 review question is not "Does it look good?" but "Does it reduce wasted retries?"

4. The most useful upgrade: less reroll, more control

This is the part I would put first in any serious evaluation.

Not the hype. This.

Alibaba Cloud's official first-and-last-frame guide says the Wan model can generate a smooth video from a first-frame image, a last-frame image, and an optional prompt, with a fixed 5-second duration and resolution options at 480P, 720P, and 1080P. That sounds simple on paper, but it changes the review logic quite a bit.

Because once you can define both endpoints of a shot, you are no longer asking the model to "make something cool."

You are asking it to bridge two known visual states.

That is a much stronger production use case for product reveals, before-and-after transformations, short brand ads, stylized transitions, and character-driven beats, where the opening and closing visuals matter more than open-ended variation.
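The constraints quoted from the first-and-last-frame guide are small enough to write down as a pre-flight check. This is a minimal sketch, assuming only what the guide is described as saying above: two frames, an optional prompt, a fixed 5-second clip, and three resolution options. The class and field names are hypothetical and do not mirror the real request schema.

```python
from dataclasses import dataclass
from typing import List

# Values taken from the guide as described in this review.
ALLOWED_RESOLUTIONS = ("480P", "720P", "1080P")
FIXED_DURATION_SECONDS = 5


@dataclass(frozen=True)
class FirstLastFrameRequest:
    first_frame: str   # path or URL of the opening image
    last_frame: str    # path or URL of the closing image
    resolution: str    # one of ALLOWED_RESOLUTIONS
    prompt: str = ""   # optional, per the guide

    def problems(self) -> List[str]:
        """Return human-readable issues; an empty list means the plan looks valid."""
        issues = []
        if not self.first_frame:
            issues.append("first_frame is required")
        if not self.last_frame:
            issues.append("last_frame is required")
        if self.resolution not in ALLOWED_RESOLUTIONS:
            issues.append(f"resolution must be one of {ALLOWED_RESOLUTIONS}")
        return issues
```

The point of a check like this is planning. If a shot cannot be described as two known frames and a 5-second bridge, it probably belongs in a different workflow.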

It also matches how the current Wan 2.7 Video page on GoEnhance frames the release: stronger control, better motion, and more deliberate shot direction, not just another text box with prettier samples.

Alibaba Cloud's first-and-last-frame guide is the strongest official source for this part of the workflow.

Bottom line: The real value of Wan 2.7 is not that it can generate a clip, but that it gives you more control over how that clip starts and ends.

5. Why Wan 2.7 works best when you start from references

Wan 2.7 looks strongest when the workflow does not begin from a blank prompt.

That is one of the clearest themes across the official model framing and the current product positioning around the release.

The official video generation overview does not only emphasize text-to-video. It also keeps reference-to-video, first-frame workflows, and first-and-last-frame workflows close to the center of the product map. That matters because it suggests Alibaba is not positioning Wan as a pure prompt playground. It is positioning Wan as a system that benefits from source material, structure, and directed intent.

That is also the most convincing way to use it.

If you already know the character design, product look, scene tone, or shot destination, Wan 2.7 becomes more valuable. The model has less freedom to wander and more information to work with. That usually means fewer rerolls and clearer creative intent.

This is why Wan 2.7 feels more persuasive for:

- product demos

- character-led short clips

- previs and storyboard-like sequences

- stylized social ads

- brand scenes where identity and tone matter

It feels less persuasive as a "just type anything" machine.

Try a reference-led image-to-video workflow

Bottom line: Wan 2.7 is most useful when you guide it with references, not when you leave it alone with a broad prompt.

6. What Wan 2.7 still does not solve

There are still limits here.

Important ones.

Alibaba Cloud's official text-to-video guide says the Wan text-to-video model supports multimodal input including text, images, and audio, and generates videos up to 15 seconds long at 1080P resolution. That is useful. It is also a reminder that this is still a short-form generation system, not a complete production environment.

The same goes for the first-and-last-frame workflow: the official guide describes it around a fixed 5-second duration. That is powerful for transitions and tightly structured clips, but it is not the same thing as a full editing timeline or a long-form storytelling environment.

So yes, Wan 2.7 looks stronger than a lot of launch-week summaries make it sound.

But no, it does not eliminate the normal boundaries of AI video work:

- clip length still matters

- workflow structure still matters

- revision strategy still matters

- not every creative problem should be solved with another generation pass
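A quick back-of-the-envelope calculation shows why clip length still matters. The Python sketch below uses only the two durations cited above, up to 15 seconds for text-to-video and a fixed 5 seconds for first-and-last-frame. It assumes clips are simply placed end to end with no overlap or trimming, which is a simplification of my own.

```python
import math

T2V_MAX_SECONDS = 15    # "up to 15 seconds" per the text-to-video guide
FLF_FIXED_SECONDS = 5   # fixed duration per the first-and-last-frame guide


def clips_needed(target_seconds: float, clip_seconds: float) -> int:
    """Minimum number of clips needed to cover a target runtime, laid end to end."""
    if target_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(target_seconds / clip_seconds)


print(clips_needed(30, T2V_MAX_SECONDS))    # 2
print(clips_needed(30, FLF_FIXED_SECONDS))  # 6
```

Even a 30-second spot means stitching several generations together. That makes sequencing and revision strategy your job, not the model's.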

Alibaba Cloud's text-to-video guide is the best official source for the short-form ceiling and the current resolution framing.

Bottom line: Wan 2.7 is a stronger short-form control model, not a shortcut past the real limits of AI video production.

7. Best use cases - and who should probably skip it

Wan 2.7 makes the most sense for teams and creators who already know roughly what they want.

That includes:

- short ads with clear start and end states

- product videos with controlled motion

- character-led scenes with stronger direction

- storyboard-style planning

- reference-based social clips

- previs work where structure matters more than final polish

These use cases fit the current official model framing much better than open-ended experimentation does.

Who should be cautious?

People whose work depends on exact manual timing, dense motion graphics, typography-heavy layout, or detailed timeline control. Wan 2.7 may help generate material for those workflows, but it does not replace the rest of the production stack. A generation-first model with broader control is still not the same thing as a traditional editor.

That is worth saying clearly.

Because this is where a lot of AI video reviews get too generous.

Bottom line: Wan 2.7 is strongest for directed short clips and weaker as a replacement for hands-on post-production workflows.

8. Wan 2.6 vs Wan 2.7 - should you actually upgrade?

If your main complaint about older Wan-era usage was that it was too open-ended, Wan 2.7 looks like a meaningful upgrade.

If your only goal is "make the frame prettier," the case is less dramatic.

What changes the equation is not just quality talk. It is the broader creation map now wrapped around the model family: text-to-video, first-frame workflows, first-and-last-frame workflows, reference-to-video, and general video editing all sit in the same official product framing. That gives Wan 2.7 a more practical identity than a typical prompt-first release.

So the better upgrade question is this:

Do you want a model that gives you more ways to steer a clip?

If yes, Wan 2.7 is easier to justify.

If not, the upgrade story becomes softer.

Bottom line: Upgrade to Wan 2.7 if your real bottleneck is control, not just image quality.

9. Where Wan 2.7 fits in a real workflow

The strongest place for Wan 2.7 is inside a directed short-video workflow.

Not as the only tool.

Not as a universal answer.

As one useful engine in a larger process.

That is also why the current GoEnhance positioning makes sense. The public Wan 2.7 page is not framed like a random model announcement. It is framed like a model you select when you need more intentional motion, stronger reference-led creation, and better shot control than a looser generation path usually gives you.

That is the right frame.

Use Wan 2.7 when you already know enough about the shot to constrain it well. Use it when you care about transition logic, scene destination, or source-guided consistency. Use it when reducing rerolls matters.

Do not use it as an excuse to avoid thinking about structure.

Bottom line: Wan 2.7 works best when the creative direction already exists and the model's job is to carry it through.

10. FAQ

Is Wan 2.7 better than Wan 2.6?

It looks more useful, not just newer. The stronger case for Wan 2.7 is broader control and a more practical workflow framing, especially around directed short-form generation.

Does Wan 2.7 support first-and-last frame control?

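Yes. Alibaba Cloud's official first-and-last-frame guide documents it: the model takes a first-frame image, a last-frame image, and an optional prompt, and generates a fixed 5-second clip at 480P, 720P, or 1080P. It is one of the clearest officially documented workflow upgrades in the current Wan video stack.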

What is Wan 2.7 best for?

It looks best for short, directed video tasks where references, start-and-end control, or stronger shot planning matter.

Is Wan 2.7 good for professional work?

It can be, especially for short-form commercial, social, or previs-style workflows. But it still works best inside a broader production process, not as a complete replacement for every tool.

Is Wan 2.7 a full editing replacement?

No. It is more controllable than a typical prompt-only model, but that does not make it a full traditional editing environment.

Bottom line: Wan 2.7 is worth serious testing for directed short video work, but it should still be judged as part of a workflow, not as a magic replacement for one.

11. Final verdict

Wan 2.7 is not at its most interesting when it is described as a revolution.

It is most interesting when it is described accurately.

This looks like a stronger AI video model because it gives creators more ways to direct a result, more structure around short-form creation, and a clearer reason to rely on references instead of hoping one loose prompt gets everything right.

That is a practical upgrade.

And in AI video, practical upgrades usually matter more than dramatic headlines.