Wan 2.7-Image Review: Why This Image Model Looks More Useful Than Most

- 1. Quick comparison table for this Wan 2.7-Image review

- 2. What Wan 2.7-Image actually gets right — and why that matters more than “better quality”

- 3. How Wan 2.7-Image should be evaluated

- 4. Character control is one of the strongest reasons to care about Wan 2.7-Image

- 5. Color control is where Wan 2.7-Image starts feeling useful for real image work

- 6. Text rendering, formulas, and layouts may be the model’s biggest differentiator

- 7. Editing is one of Wan 2.7-Image’s biggest strengths — and still the place to stay cautious

- 8. Best use cases — and who should probably skip it

- 9. Where Wan 2.7-Image fits in a real image workflow

- 10. FAQ

- 11. Final verdict

Wan 2.7-Image is worth reviewing because it appears to improve control in ways that matter more than another jump in image prettiness.

The real question is not whether it can make a beautiful sample on a good day, but whether it can keep identities separate, obey a color system, render dense text, and edit one part of an image without damaging the rest. Based on Alibaba’s release materials and the early scenario-based testing already circulating around this launch, Wan 2.7-Image looks less like a pure “wow” model and more like a model built for image work that has to stay usable after generation.

1. Quick comparison table for this Wan 2.7-Image review

Wan 2.7-Image stands out most when control matters more than visual surprise.

| Model | Best for | What it does unusually well | What still breaks | Learning curve | My take |
|---|---|---|---|---|---|
| Wan 2.7-Image | Controllable image generation and editing | Faces, palette control, long text, targeted edits | Complex edits can still feel uneven | Medium | One of the most workflow-friendly image models here |
| Midjourney | Stylized image generation | Taste, atmosphere, visual surprise | Fine control and text-heavy layouts | Low to medium | Great for exploration, weaker for precision |
| Flux-style models | Prompt-following and realism | Clean prompt response and strong detail | Brand consistency and editing vary by setup | Medium | Strong generalists, less specialized for layout and text |
| Recraft | Design-oriented visuals and graphic assets | Structured outputs and cleaner design language | Less flexible for broad photographic directions | Low to medium | Better for some design jobs than art-heavy prompting |
| Traditional design tools | Layout-first visual work | Precise manual composition and typography | No built-in generation | Medium | Still better for final static layout polish |

Bottom line: Wan 2.7-Image is not the most exciting model here for surprise value, but it may be one of the most practical when precision matters.

2. What Wan 2.7-Image actually gets right — and why that matters more than “better quality”

Wan 2.7-Image matters because it targets the parts of image generation that usually break real workflows.

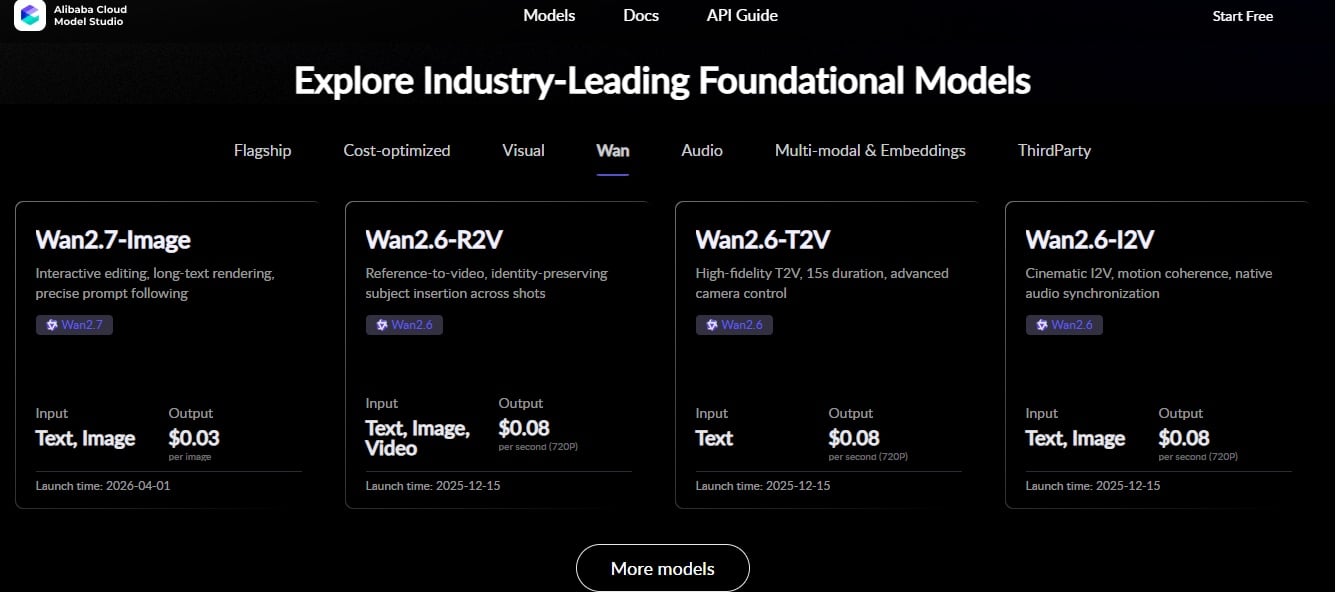

Alibaba’s launch framing is revealing. The emphasis is not just “better pictures.” It is character customization, palette control, long-text rendering, and click-to-edit. The official announcement says the model launched on April 1, 2026, with those control-focused upgrades. That is more serious positioning than a typical image-model release.

Teams are rarely blocked because AI images are ugly. They are blocked because six people in one frame start looking related, the “brand color” comes back louder than requested, the poster headline turns into nonsense, or one small correction rewrites the entire image. Those are not glamorous failures. They are production failures.

That is why Wan 2.7-Image is more interesting as a control-focused image model than as a pure aesthetics model.

Bottom line: The real story here is not image quality alone. It is whether Wan 2.7-Image loses less of the user’s intent between brief and output than older image workflows.

3. How Wan 2.7-Image should be evaluated

Wan 2.7-Image should be judged like a production tool, not like a prompt lottery.

A launch like this is easy to misread if the evaluation starts from “what is the prettiest sample?” That is the wrong test. The right test is stress on the tasks that usually expose weak controllability:

- multi-person identity separation across 4, 6, and 8 subjects

- same-character edits, where one facial trait changes but the rest should stay stable

- palette-driven generation from a reference color image

- dense text rendering, including long Chinese text and formula-heavy layouts

- box-based local editing, where only one region should change

- multi-reference consistency across a controlled composition

That evaluation logic matches what Alibaba is highlighting in the release and what the model listing in Model Studio describes as interactive editing and multi-image reference generation. It also matches the most useful early hands-on scenarios already being shared around this launch.

That matters because a strong image model should not only produce a striking frame. It should hold together when the brief gets specific.
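One way to keep this kind of evaluation honest is to write the stress tests down as a checklist and record a pass or fail for each attempt. The sketch below is a minimal illustration, not part of any Wan 2.7-Image tooling: the `ControlTest` class, its fields, and the wording of each pass criterion are hypothetical, and the judgments themselves are assumed to come from a human reviewer.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ControlTest:
    """One controllability stress test (hypothetical structure, for illustration)."""
    name: str
    pass_criterion: str
    results: List[bool] = field(default_factory=list)

    def record(self, passed: bool) -> None:
        """Store the reviewer's pass/fail judgment for one generation attempt."""
        self.results.append(passed)

    def pass_rate(self) -> Optional[float]:
        """Fraction of attempts that passed, or None if nothing was recorded."""
        if not self.results:
            return None
        return sum(self.results) / len(self.results)


def build_suite() -> List[ControlTest]:
    """Mirror the six stress tests listed above; the criteria are assumptions."""
    return [
        ControlTest("multi-person identity separation (4, 6, 8 subjects)",
                    "every face stays visually distinct"),
        ControlTest("same-character edit",
                    "one facial trait changes, the rest stays stable"),
        ControlTest("palette-driven generation",
                    "output stays within the reference colors"),
        ControlTest("dense text rendering",
                    "long text and formulas remain readable and correct"),
        ControlTest("box-based local edit",
                    "only the marked region changes"),
        ControlTest("multi-reference consistency",
                    "all references survive in one controlled composition"),
    ]
```

Recording several attempts per test, rather than one lucky sample, is the point: a pass rate across attempts says more about controllability than a single striking frame.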

Bottom line: Wan 2.7-Image looks most promising when you test it against controllability, not just aesthetics.

4. Character control is one of the strongest reasons to care about Wan 2.7-Image

Wan 2.7-Image looks strongest when the job requires multiple distinct people or targeted identity edits without collapsing the whole image.

This is one of the oldest weak points in AI image generation. Ask for a group and the faces start converging. Ask for a specific change to one subject and the image begins drifting elsewhere. Early testing around Wan 2.7-Image suggests it performs unusually well in both of those situations: keeping multiple people visually distinct, and modifying one subject’s facial or styling details without destroying the rest of the composition.

That is more valuable than it sounds. Character control matters for campaign variations, e-commerce lifestyle shoots, recurring talent, brand mascots, educational illustrations, and any visual system that needs more than one “pretty one-off.” It also matters for trust. If a model cannot preserve identity logic, it becomes hard to build repeatable visual work on top of it.

What makes this more interesting is that Wan 2.7-Image is not framed only as a generator. It is framed as a generator that can follow stacked instructions more cleanly than usual, and that is a major difference.

Bottom line: If your work involves repeated characters or group scenes, Wan 2.7-Image addresses one of the most annoying old failures in AI image generation.

5. Color control is where Wan 2.7-Image starts feeling useful for real image work

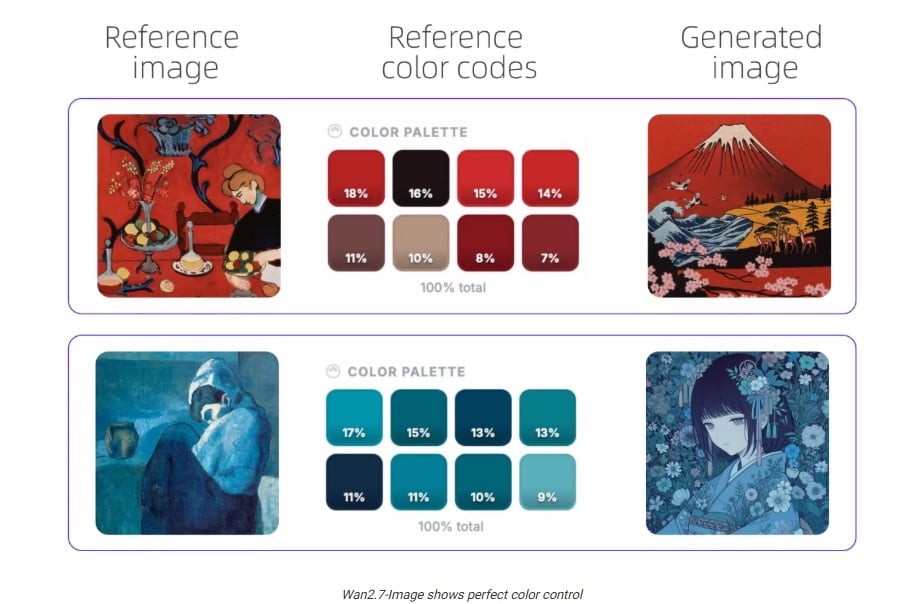

The color palette feature is one of the clearest signs that Wan 2.7-Image is trying to solve real visual production problems.

Color drift is a bigger issue than many reviews admit. A model can follow the nouns in a prompt and still miss the mood completely. Ask for dusty, muted tones and get loud candy color. Ask for dark cinematic restraint and get bright lifestyle gloss. That is not a minor miss. In campaign work, poster work, and branded image systems, that is the entire job going off-track.

Wan 2.7-Image appears to treat palette as structure rather than decoration. If you are specifically looking at Wan 2.7-Image color control, this is the feature to watch. The promise is not vague “style matching.” It is closer to extracting usable tonal relationships from a reference and keeping the image inside that logic.

That is exactly the kind of capability that makes an image model more valuable for design-adjacent work. It is also why Wan 2.7-Image feels closer to a serious AI image generator workflow than a one-off prompt experiment.
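Palette discipline can also be checked with a number rather than by eye. The sketch below is one rough way to do it, assuming you already have the output pixels and the reference palette as RGB tuples (loading them from an image file is left to whichever image library you use). The function names are hypothetical, and plain RGB distance is only a crude proxy; a perceptual color space would likely track what a designer sees more closely.

```python
import math
from typing import Sequence, Tuple

RGB = Tuple[int, int, int]


def nearest_palette_distance(pixel: RGB, palette: Sequence[RGB]) -> float:
    """Euclidean RGB distance from one pixel to its closest palette color."""
    return min(math.dist(pixel, color) for color in palette)


def palette_drift(pixels: Sequence[RGB], palette: Sequence[RGB]) -> float:
    """Mean distance of all pixels to their nearest palette color.

    0.0 means every pixel sits exactly on a palette color; larger values
    mean the image wanders further from the reference palette.
    """
    if not pixels or not palette:
        raise ValueError("pixels and palette must both be non-empty")
    total = sum(nearest_palette_distance(p, palette) for p in pixels)
    return total / len(pixels)
```

Comparing the drift of several outputs generated from the same reference gives a simple way to see whether "dusty, muted tones" actually came back dusty and muted, or louder than requested.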

Bottom line: Wan 2.7-Image becomes much more interesting when palette discipline matters more than random beauty.

6. Text rendering, formulas, and layouts may be the model’s biggest differentiator

If Wan 2.7-Image holds up under dense text and formula-heavy prompts, that changes who this model is actually for.

Text rendering is where most image models start bluffing. A short sign is one thing. A page-like block of text, a structured infographic, or an academic layout full of formulas is a different problem entirely. Research on long-text image generation keeps making the same point: long-form text remains unusually hard, even for otherwise strong models.

That is why this part matters so much. It is also why it deserves skepticism. The strongest early materials around Wan 2.7-Image suggest it handles long text, formulas, and layout-heavy content better than typical image models. If that holds up in broader use, it expands the audience immediately.

Now the model is not only relevant for people making posters or ad visuals. It becomes relevant for education graphics, research explainers, diagrams, information-heavy social assets, and editorial mockups where readability matters.

It still does not replace layout software. It should not be judged that way. But it does seem to push image generation closer to a place where text-heavy visual ideation becomes genuinely usable.
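Because this claim deserves skepticism, it helps to score text rendering instead of eyeballing it. A common approach is a character error rate: compare the text you asked for with the text actually read back from the image, whether transcribed by hand or by an OCR tool of your choice. The sketch below assumes that transcription step has already happened; the function names are illustrative, and the metric is standard edit distance, not anything specific to Wan 2.7-Image.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions,
    or substitutions needed to turn string a into string b."""
    if len(a) < len(b):
        a, b = b, a  # keep the inner row as short as possible
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


def character_error_rate(intended: str, rendered: str) -> float:
    """Edit distance divided by the length of the intended text."""
    if not intended:
        raise ValueError("intended text must be non-empty")
    return levenshtein(intended, rendered) / len(intended)
```

Python strings are compared per Unicode code point, so the same function works for long Chinese text as well as Latin text. Formula-heavy layouts are harder to transcribe reliably, so treat scores there as a rough signal.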

Bottom line: Wan 2.7-Image may be one of the few image models worth taking seriously for text-heavy visual work.

7. Editing is one of Wan 2.7-Image’s biggest strengths — and still the place to stay cautious

Wan 2.7-Image’s editing story is compelling, but this is still the area where skepticism is healthiest.

Image editing often looks solved in demos and unstable in practice. You try to move one object, replace one logo, or swap one local element, and the rest of the image starts mutating. That is why box-based or selection-based editing matters. It follows the way real users think. Recent evaluation work also treats controllability and reference consistency as core image-editing criteria, not side metrics.

Wan 2.7-Image appears to do well here, especially in localized edits such as moving a subject inside a marked region or replacing one selected area without rewriting the entire frame. That is a strong signal. But it is not the same as “editing is solved.” Early testing already points to the kind of limitation that still matters: compositing logic can slip, lighting integration can feel incomplete, and difficult multi-reference scenes can reveal weak spots around contact, grounding, or local realism.

That is also why an image-to-image workflow still matters. Good editing is rarely about one magic prompt. It is about controlled iteration.
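Controlled iteration is easier when "the rest of the image stayed put" is measurable. The sketch below is a minimal check under stated assumptions: both images are the same size and already loaded as rows of RGB tuples, and the edit box is given as `(x0, y0, x1, y1)` with the right and bottom edges exclusive. The function name and the `tolerance` parameter are hypothetical.

```python
from typing import Sequence, Tuple

RGB = Tuple[int, int, int]
Image = Sequence[Sequence[RGB]]  # rows of pixels
Box = Tuple[int, int, int, int]  # x0, y0, x1, y1 (x1 and y1 exclusive)


def outside_box_change_ratio(before: Image, after: Image,
                             box: Box, tolerance: int = 0) -> float:
    """Fraction of pixels outside the edit box whose value changed.

    A pixel counts as changed when any channel differs by more than
    `tolerance`. 0.0 means the edit stayed entirely inside the box.
    """
    if len(before) != len(after):
        raise ValueError("images must have the same height")
    x0, y0, x1, y1 = box
    changed = 0
    total = 0
    for y, (row_before, row_after) in enumerate(zip(before, after)):
        if len(row_before) != len(row_after):
            raise ValueError("images must have the same width")
        for x, (pb, pa) in enumerate(zip(row_before, row_after)):
            if x0 <= x < x1 and y0 <= y < y1:
                continue  # inside the edit region, changes are expected
            total += 1
            if max(abs(a - b) for a, b in zip(pa, pb)) > tolerance:
                changed += 1
    return changed / total if total else 0.0
```

A small nonzero tolerance is usually sensible, since re-encoding alone can shift pixel values slightly. This check only catches spillover outside the box; it says nothing about lighting integration or grounding inside it, which still need a human eye.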

Bottom line: Wan 2.7-Image makes editing feel more direct, but hard edits still belong in the “promising, not fully solved” category.

8. Best use cases — and who should probably skip it

Wan 2.7-Image is not for everyone, but it looks unusually strong for image tasks that depend on fidelity, consistency, and editability.

The clearest fits are designers building posters and branded visuals, e-commerce and marketing teams producing repeatable product or model variations, and educators or researchers making explainers, diagrams, and formula-heavy image assets. In all of those cases, image generation is only part of the job. The real need is controllable output that can survive revision.

It looks less essential for creators who mostly want one-off social images. It also may not be the first pick for highly stylized exploration where surprise matters more than control. If your main work is layout-first print production, decks, or materials that need exact manual typography and composition, traditional design tools still make more sense for the final mile.

That is not a weakness. It is a boundary. Good reviews should name those.

Bottom line: Wan 2.7-Image is best when you need controllable image assets, not when you just want visual serendipity.

9. Where Wan 2.7-Image fits in a real image workflow

Wan 2.7-Image makes the most sense when generation is only the beginning of the image task, not the end of it.

The strongest use case is not random prompting for a single attractive frame. It is building an image asset that still needs refinement, adaptation, or controlled reuse. That includes campaign visuals, posters, e-commerce variations, educational graphics, and reference-based compositions.

This is where Wan 2.7-Image looks more useful than many models that are good at visual surprise but weaker at controlled follow-up work. Once the base image is right, teams can continue refining outputs through a more controlled editing process or expand that base into a broader static asset pipeline.

This is also where a platform such as GoEnhance fits: not as proof that Wan 2.7-Image is strong, but as a place where a reader can continue exploring image-generation and image-editing workflows once the review has defined what matters.

Bottom line: Wan 2.7-Image is most valuable when the job continues into image refinement and controlled reuse.

10. FAQ

The real decision is not whether Wan 2.7-Image is interesting, but whether it fits the kind of image work you actually do.

Is Wan 2.7-Image good for professional design work?

Yes, especially if “design work” means controlled image generation, editable campaign assets, reference-based palette work, or text-heavy visuals in early production. No, if you mean final manual layout polish.

Is Wan 2.7-Image better at text rendering than typical image models?

Early evidence suggests yes, especially on long text and formula-heavy layouts, though this is still something serious users should verify with their own scenarios.

Can Wan 2.7-Image keep characters consistent across multiple images?

That appears to be one of its strongest areas, particularly in group scenes and targeted identity edits, though consistency should still be tested case by case.

Is Wan 2.7-Image worth using for e-commerce and marketing visuals?

Yes. That is one of the clearest fits because color consistency, repeatable edits, and product or talent variation all matter there.

What is Wan 2.7-Image still weak at?

Hard editing still deserves caution. Some compositing details, lighting integration, and difficult localized edits can still look off.

Bottom line: The best reason to care about Wan 2.7-Image is not hype. It is that a few painful image-production tasks may become less random.

11. Final verdict

Wan 2.7-Image looks like one of the first image models in this cycle that deserves attention for controllable visual production, not just prettier samples.

Its value is clearest when the job needs multiple distinct faces, cleaner palette control, real text handling, and edits that stay closer to the requested region. It does not replace layout software, and editing should not be treated as fully reliable yet. That limitation should be stated clearly.

If your workflow is primarily layout-based — flyers, branded slide decks, print collateral — traditional design tools are still the better tools for that specific job. But if you want controllable image generation that can hold up across editing, reuse, and structured visual work, Wan 2.7-Image is a model worth paying attention to.